The algebraic approximation gives good results when the points are spread all around the circle, but it is limited when only an arc is available to fit. For a circle, using ODR seems a bit overkill, but it can be very handy for more complex use cases like ellipses. `optimize.leastsq` is the most efficient method and can be two to ten times faster than ODR, at least in terms of the number of function calls. Adding a function to compute the Jacobian can reduce the number of function calls by a factor of two to five.
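The comparison above can be tried on a small scale with the sketch below. It is only an illustration under stated assumptions: the data are synthetic (a noisy full circle centred at (3, -1) with radius 2), and the helper names `algebraic_fit`, `dist_residuals`, `dist_jacobian` and `fit` are hypothetical, not taken from the original script. It fits the circle algebraically, then with `optimize.leastsq` with and without a user-supplied Jacobian, and counts how often the residual function is called.

```python
import numpy as np
from scipy import optimize

# Synthetic data (an assumption of this sketch): noisy points on a full circle
rng = np.random.default_rng(0)
theta = np.linspace(0.0, 2.0 * np.pi, 40, endpoint=False)
x = 3.0 + 2.0 * np.cos(theta) + rng.normal(scale=0.01, size=theta.size)
y = -1.0 + 2.0 * np.sin(theta) + rng.normal(scale=0.01, size=theta.size)


def algebraic_fit(x, y):
    """Linear least squares on x^2 + y^2 = 2*xc*x + 2*yc*y + c."""
    A = np.column_stack([2 * x, 2 * y, np.ones_like(x)])
    (xc, yc, c), *_ = np.linalg.lstsq(A, x**2 + y**2, rcond=None)
    return xc, yc, np.sqrt(c + xc**2 + yc**2)


n_calls = {"f": 0}  # counts calls to the residual function only


def dist_residuals(c):
    """Distance of each point to the centre c, minus the mean distance."""
    n_calls["f"] += 1
    ri = np.hypot(x - c[0], y - c[1])
    return ri - ri.mean()


def dist_jacobian(c):
    """Jacobian of dist_residuals, shape (2, n) as expected with col_deriv=True."""
    ri = np.hypot(x - c[0], y - c[1])
    d = np.empty((2, x.size))
    d[0] = (c[0] - x) / ri
    d[1] = (c[1] - y) / ri
    return d - d.mean(axis=1, keepdims=True)


def fit(use_jacobian):
    """Run leastsq from the centroid; return xc, yc, r and the call count."""
    n_calls["f"] = 0
    start = (x.mean(), y.mean())
    kwargs = {"Dfun": dist_jacobian, "col_deriv": True} if use_jacobian else {}
    center, ier = optimize.leastsq(dist_residuals, start, **kwargs)
    radius = np.hypot(x - center[0], y - center[1]).mean()
    return center[0], center[1], radius, n_calls["f"]


alg = algebraic_fit(x, y)
no_jac = fit(use_jacobian=False)
with_jac = fit(use_jacobian=True)

print("algebraic          : xc=%.3f yc=%.3f r=%.3f" % alg)
print("leastsq            : xc=%.3f yc=%.3f r=%.3f calls=%d" % no_jac)
print("leastsq + jacobian : xc=%.3f yc=%.3f r=%.3f calls=%d" % with_jac)
```

The call counts depend on the data and on the starting point, so the exact ratio between the two `leastsq` runs will vary from one data set to another.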

Here is an example where data points form an arc:

Figures: arc_v5.png, arc_residu2_v6.png

Conclusion

* as shown in the figures, the two functions `residu` and `residu_2` are not equivalent, but their minima are close in this case.
* `nb_calls` corresponds to the number of calls to the function to be minimized, and does not take into account the number of calls to the derivative functions. This differs from the number of iterations, as ODR can make multiple calls during an iteration.

    #! python
    # = METHOD 3b =
    from numpy import empty, empty_like, sqrt, vstack
    from scipy import odr

    method_3b = "odr with jacobian"

    def f_3b(beta, x):
        """ implicit definition of the circle """
        return (x[0] - beta[0])**2 + (x[1] - beta[1])**2 - beta[2]**2

    def jacb(beta, x):
        """ Jacobian function with respect to the parameters beta.
        return df_3b/dbeta
        """
        xc, yc, r = beta
        xi, yi = x

        df_db = empty((beta.size, x.shape[1]))
        df_db[0] = 2 * (xc - xi)   # d_f/dxc
        df_db[1] = 2 * (yc - yi)   # d_f/dyc
        df_db[2] = -2 * r          # d_f/dr
        return df_db

    def jacd(beta, x):
        """ Jacobian function with respect to the input x.
        return df_3b/dx
        """
        xc, yc, r = beta
        xi, yi = x

        df_dx = empty_like(x)
        df_dx[0] = 2 * (xi - xc)   # d_f/dxi
        df_dx[1] = 2 * (yi - yc)   # d_f/dyi
        return df_dx

    def calc_estimate(data):
        """ Return a first estimation on the parameter from the data """
        xc0, yc0 = data.x.mean(axis=1)
        r0 = sqrt((data.x[0] - xc0)**2 + (data.x[1] - yc0)**2).mean()
        return xc0, yc0, r0

    # for implicit function :
    #   data.x contains both coordinates of the points
    #   data.y is the dimensionality of the response
    lsc_data = odr.Data(vstack([x, y]), y=1)   # x, y: coordinates of the data points
    lsc_model = odr.Model(f_3b, implicit=True, estimate=calc_estimate,
                          fjacd=jacd, fjacb=jacb)
    lsc_odr = odr.ODR(lsc_data, lsc_model)     # beta0 has been replaced by an estimate function
    lsc_odr.set_job(deriv=3)                   # use user derivatives function without checking
    lsc_odr.set_iprint(iter=1, iter_step=1)    # print details for each iteration
    lsc_out = lsc_odr.run()

    # OLS and ridge fits on noisy copies of the training points
    # (X_train, y_train, X_test and the imports come from the script header)
    np.random.seed(0)

    classifiers = dict(
        ols=linear_model.LinearRegression(), ridge=linear_model.Ridge(alpha=0.1)
    )

    for name, clf in classifiers.items():
        fig, ax = plt.subplots(figsize=(4, 3))

        for _ in range(6):
            this_X = 0.1 * np.random.normal(size=(2, 1)) + X_train
            clf.fit(this_X, y_train)

            ax.plot(X_test, clf.predict(X_test), color="gray")
            ax.scatter(this_X, y_train, s=3, c="gray", marker="o", zorder=10)

        clf.fit(X_train, y_train)
        ax.plot(X_test, clf.predict(X_test), linewidth=2, color="blue")
        ax.scatter(X_train, y_train, s=30, c="red", marker="+", zorder=10)
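To make the remark about the two error functions on an arc concrete, here is a minimal sketch. Several things in it are assumptions: the data are a synthetic 120-degree arc (centre (10, 4), radius 5), and the definitions of `residu` (sum of squared geometric distances) and `residu_2` (sum of squared algebraic distances) are guesses at what those names denote in the text. The algebraic minimum is computed by linear least squares and then used as the starting point for the geometric fit.

```python
import numpy as np
from scipy import optimize

# Synthetic arc (an assumption of this sketch): 120 degrees of a circle
rng = np.random.default_rng(1)
theta = np.linspace(0.0, 2.0 * np.pi / 3.0, 50)
x = 10.0 + 5.0 * np.cos(theta) + rng.normal(scale=0.02, size=theta.size)
y = 4.0 + 5.0 * np.sin(theta) + rng.normal(scale=0.02, size=theta.size)


def residu(p):
    """Geometric cost: sum of squared (distance to centre - r)."""
    xc, yc, r = p
    return np.sum((np.hypot(x - xc, y - yc) - r) ** 2)


def residu_2(p):
    """Algebraic cost: sum of squared (squared distance - r^2)."""
    xc, yc, r = p
    return np.sum(((x - xc) ** 2 + (y - yc) ** 2 - r**2) ** 2)


# Minimum of residu_2: linear in (xc, yc, c) with c = r^2 - xc^2 - yc^2
A = np.column_stack([2 * x, 2 * y, np.ones_like(x)])
(xc_a, yc_a, c), *_ = np.linalg.lstsq(A, x**2 + y**2, rcond=None)
p_alg = np.array([xc_a, yc_a, np.sqrt(c + xc_a**2 + yc_a**2)])


def distances(p):
    """Signed geometric distance of each point to the circle p = (xc, yc, r)."""
    return np.hypot(x - p[0], y - p[1]) - p[2]


# Minimum of residu, starting from the algebraic solution
p_geo, ier = optimize.leastsq(distances, p_alg)

print("algebraic fit :", p_alg, "residu =", residu(p_alg), "residu_2 =", residu_2(p_alg))
print("geometric fit :", p_geo, "residu =", residu(p_geo), "residu_2 =", residu_2(p_geo))
```

Each parameter set should score best on the cost it was fitted with, which is one way to see that the two functions are not equivalent even when their minima lie close together.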

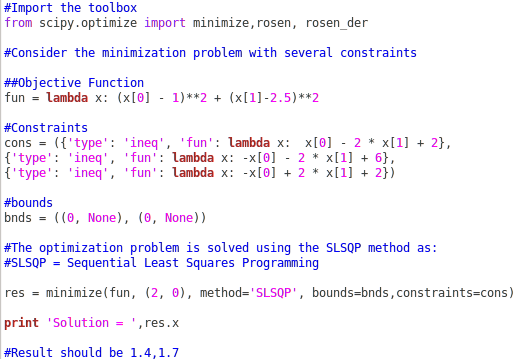

#SCIPY LEAST SQUARES CODE#

    # Code source: Gaël Varoquaux
    # Modified for documentation by Jaques Grobler
    # License: BSD 3 clause

    import numpy as np
    import matplotlib.pyplot as plt
    from sklearn import linear_model

    X_train = np.